From deployment to open standards: Arm advances AI infrastructure for the agentic era

As AI systems evolve from running models to orchestrating autonomous, agentic workflows, the requirements for infrastructure are fundamentally changing. Workloads are no longer defined by isolated inference tasks, but by thousands of coordinated interactions across models, tools and services. In this new environment, the CPU is becoming the control layer for AI – managing orchestration, data movement and system behavior across the stack.

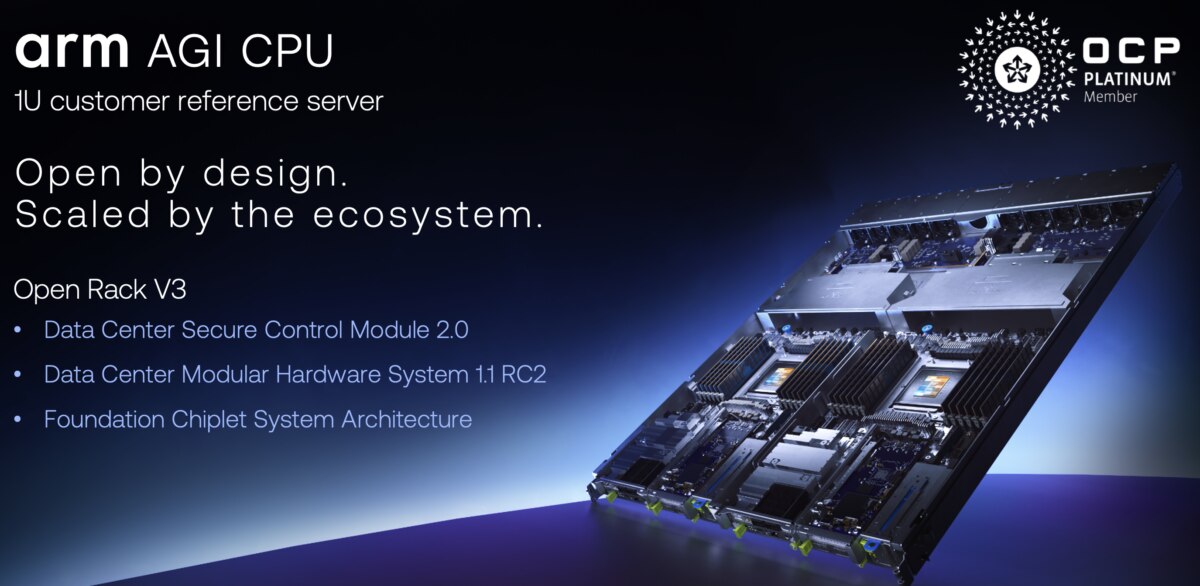

To address these emerging demands, Arm recently introduced the Arm AGI CPU, purpose-built for the next generation of AI infrastructure. The Arm AGI CPU emphasizes high core scalability, memory bandwidth, and system-level efficiency, enabling it to coordinate complex interactions across CPUs, GPUs and other accelerators. It is optimized for performance, consistency and interoperability across large-scale deployments.

At the 2026 OCP EMEA Summit, Arm is announcing that Verda, the European cloud provider, will deploy the Arm AGI CPU for agentic AI orchestration as part of its next-generation infrastructure, alongside NVIDIA GB300-based systems and upcoming NVIDIA Vera Rubin-based systems. This deployment reflects a broader industry shift toward tightly integrated CPU-accelerator architectures, where CPUs play a central role in enabling scalable, efficient AI systems.

At the same time, Arm is extending its long-standing commitment to open, standards-based infrastructure through a set of in-progress contributions to the Open Compute Project (OCP). Together, these efforts – real-world deployments and open ecosystem collaboration – highlight how AI infrastructure is evolving and how Arm is helping to define the compute foundation for this next phase.

Scaling agentic AI with Meta

Arm’s work on the AGI CPU is closely aligned with leading hyperscalers shaping the future of AI infrastructure, including lead partner and customer Meta. This collaboration reflects a shared focus on building scalable, open platforms capable of supporting increasingly complex AI workloads.

The challenges with AI systems go beyond raw compute performance. System-wide efficiency and interoperability are also critical to scaling workloads effectively. Arm and Meta are working together on infrastructure that meets these requirements, using the Arm AGI CPU to enable more efficient orchestration and deployment of agentic AI.

This collaboration underscores the broader industry shift, as hyperscalers move toward tightly integrated systems where CPUs play a central role in managing AI workflows. By collaborating on open architectures and system-level design principles, Arm and Meta are helping define the foundation for next-generation AI infrastructure.

Verda deployment: AI infrastructure in practice

Building on this momentum, Verda’s deployment of the Arm AGI CPU shows how next-generation AI systems are being built. By combining Arm-based CPU infrastructure with NVIDIA GB300 GPU platforms, Verda is enabling a tightly coupled architecture designed to support agentic AI workloads at scale.

In this model, accelerators deliver the performance required for model execution, while the CPU orchestrates workflows, manages data movement and coordinates system behavior across components. This balance is essential for agent-based systems, where performance depends not only on compute throughput, but on efficient coordination across the entire stack.

Verda’s adoption reflects a broader trend toward integrated, heterogeneous systems optimized for AI, where the CPU plays a central, strategic role.

Agentic AI is redefining infrastructure

Traditional AI pipelines are relatively linear: data in, inference out. Agentic systems are different. They plan, reason and act – often through continuous loops that span multiple models, services and decision points.

This shift is driving a step-change in infrastructure demands. Accelerators continue to execute model workloads and generate tokens, but CPUs are increasingly responsible for coordinating these activities across the system. As a result, CPU demand is growing not only in scale, but in importance.
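The division of labor described above can be sketched as a toy plan-act-observe loop. This is a minimal illustration under stated assumptions, not Arm's, Meta's or Verda's software: `run_model`, `TOOLS` and `agent_loop` are hypothetical names, and the "model" is a hard-coded stub standing in for accelerator-side inference.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for accelerator-side inference: given the goal and the
# history so far, return the next (action, argument) pair, or ("done", answer).
def run_model(goal: str, history: List[Tuple[str, str]]) -> Tuple[str, str]:
    if not history:
        return ("search", goal)
    if len(history) == 1:
        return ("summarize", history[-1][1])
    return ("done", history[-1][1])

# Hypothetical tools; in a real system these would be services or other models.
TOOLS: Dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for '{query}'",
    "summarize": lambda text: f"summary of {text}",
}

def agent_loop(goal: str, max_steps: int = 8) -> Tuple[str, int]:
    """CPU-side orchestration: call the model, dispatch tools, track state."""
    history: List[Tuple[str, str]] = []
    model_calls = 0
    for _ in range(max_steps):
        action, arg = run_model(goal, history)  # accelerator work (stubbed here)
        model_calls += 1
        if action == "done":
            return arg, model_calls
        result = TOOLS[action](arg)             # coordination and data movement
        history.append((action, result))
    raise RuntimeError("step budget exhausted before the agent finished")

if __name__ == "__main__":
    answer, calls = agent_loop("arm agi cpu")
    print(answer, calls)
```

Even in this toy version, a single request triggers several model calls plus tool dispatch and state tracking between them; that bookkeeping is the work the article attributes to the CPU.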

As these systems expand, consistency across hardware platforms and system management becomes critical. Standardized architectures such as the Server Base System Architecture (SBSA) and Server Base Manageability Requirements (SBMR) help ensure that complex, multi-agent workloads can run reliably across diverse environments without requiring bespoke integration.

Scaling AI infrastructure through open standards

As AI systems grow in complexity, scaling them efficiently requires more than advances in silicon. It requires alignment across the ecosystem, spanning hardware, firmware, system design and deployment models.

Arm has multiple specification contributions to OCP in progress, intended to enable this alignment and reduce friction for partners building Arm-based AI infrastructure. These contributions span three key areas:

Day zero deployment readiness

Deploying infrastructure at scale requires confidence from the beginning. Arm is advancing its established system architecture specifications within OCP, including SBSA, SBMR and the Arm Data Center Architecture Compliance (ADAC) framework.

Together, these provide a consistent foundation for hardware platforms, system management and validation, which enables operating systems and applications to run unmodified across implementations. Complementary tooling for diagnostics, compliance testing and system validation further helps partners bring up systems more quickly and deploy with reduced operational risk.

Reference designs for faster adoption

To accelerate time from silicon to deployment, Arm is contributing reference server designs for Arm AGI CPU-based systems. These include server hardware specifications and firmware development frameworks that provide a production-ready foundation for partners.

By standardizing key elements of system design while preserving flexibility for differentiation, these contributions will help simplify development and enable faster, more efficient deployment across a range of use cases.

Enabling an open chiplet ecosystem

As AI infrastructure evolves, chiplet-based designs are becoming increasingly important for scaling performance and flexibility. Through its Foundation Chiplet System Architecture (FCSA) work with OCP and ecosystem partners, Arm is helping to enable a more open and interoperable chiplet ecosystem.

This approach supports modular system design and reduces integration complexity, allowing partners to more efficiently develop and deploy AI-optimized silicon platforms.

Ecosystem momentum

Arm’s work with OCP is part of a broader industry effort to align on open, scalable infrastructure for AI.

“As AI infrastructure scales, standardization across the stack becomes increasingly important to enable interoperability and efficiency,” said Paul Saab, software engineer, Meta. “Our collaboration with Arm reflects a shared focus on advancing open platforms that can support large-scale AI workloads.”

George Tchaparian, CEO, Open Compute Project, said, “OCP brings together a global community to accelerate innovation through open collaboration. Contributions across areas such as chiplets, system readiness and reference designs are key to enabling broader adoption of open AI infrastructure.”

And Ruben Bryon, Founder & CEO, Verda, noted, “At Verda, we’re operating a renewable-powered AI cloud built for ML teams. By pairing Arm AGI CPU with our NVIDIA GB300 and upcoming VR200 fleet, we aim to deliver a fully Arm-native stack from orchestration to inference, giving customers the density and efficiency that agentic AI demands at scale.”

Building the foundation for the next phase of AI

As AI infrastructure evolves, success will depend not only on performance, but on the ability to deploy, scale and interoperate across increasingly complex systems. Open standards and ecosystem collaboration will be critical to enabling this next phase.

Arm’s approach – combining high-performance compute with open, standardized system foundations – establishes the CPU as a central layer in AI infrastructure. Through real-world deployments such as Verda and continued collaboration within OCP, Arm is working with partners across the industry to build the foundation for scalable, production-ready AI systems.
