What is agentic AI and why it's reshaping AI from cloud to edge

Power demand for AI is rising rapidly as workloads continue to scale in both volume and complexity. Today, the real constraint is not raw compute, but the ability to deliver efficient compute within fixed limits on power, cooling, and physical space.

At the same time, the nature of AI workloads is changing. Systems are evolving from short, user-driven interactions to continuous, multi-step processes that generate and manage work autonomously. Meeting this shift requires technologies designed to maximize performance per watt and maintain consistent performance under continuous loads, rather than optimizing to handle intermittent spikes.

That change in workload behaviour is increasingly being driven by agentic AI.

Unlike traditional inference, agentic AI does not just generate tokens but coordinates a sequence of decisions, tool calls, retrieval steps, memory accesses and model interactions. That makes orchestration a first-order requirement, highlighting the importance of the CPU as the system component that manages and sustains those flows.

What is agentic AI?

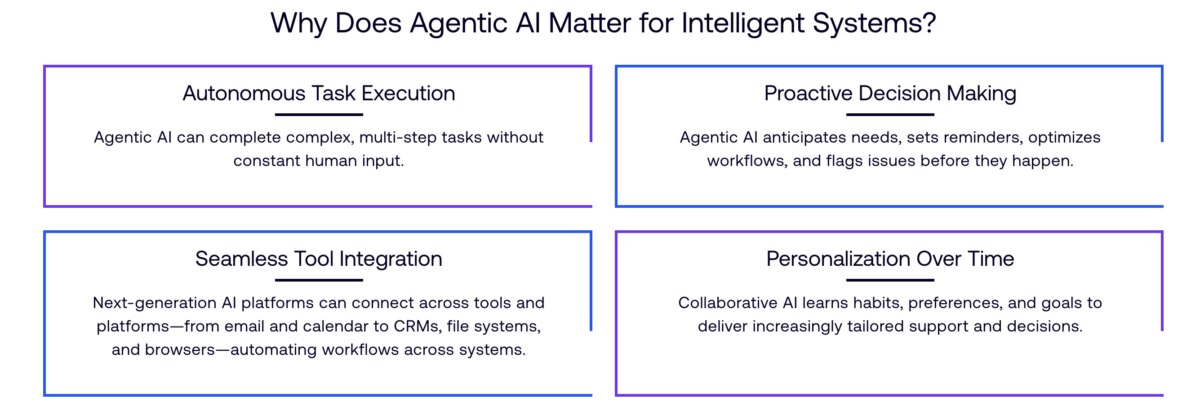

Agentic AI powers a new class of systems that can plan, execute and adapt tasks autonomously, with minimal human input. Instead of responding to a single prompt, these systems break tasks into steps, interact with tools and services, and continuously adapt as they run.

For example, an agentic AI system can take a high-level request like “prepare a market analysis report”, gather data from multiple sources, run analysis, generate a report, and share it – all without requiring step-by-step human instruction.

This marks a clear change in how AI operates. Traditional systems were largely reactive: a user submits a prompt, the model generates a response, and the interaction ends. Agentic AI systems, by contrast, are persistent. They run workflows, coordinate processes, and operate beyond a single interaction.

As these systems coordinate tasks, interact with multiple models and make decisions in real time, system activity increases faster than the pace of direct human interaction. The result is a step-change in system load, with workloads that are continuous, concurrent and significantly more demanding to run.

How agentic AI systems work

Agentic AI systems rely on a sequence of steps, such as planning, orchestration, learning and taking action. Each step introduces dependencies that must be resolved in the correct order, often across multiple services.
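The ordering constraint described above can be sketched as a dependency graph. The following is a minimal Python illustration, not a description of any particular agent framework: the step names are hypothetical (loosely based on the market analysis example), and `execution_waves` is a helper introduced here for illustration. It uses the standard library's `graphlib.TopologicalSorter` (Python 3.9 or later) to group steps into waves, where every step in a wave has all of its dependencies resolved and could run concurrently.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each step maps to the set of steps it depends on.
workflow = {
    "plan": set(),
    "gather_market_data": {"plan"},
    "gather_pricing_data": {"plan"},
    "run_analysis": {"gather_market_data", "gather_pricing_data"},
    "generate_report": {"run_analysis"},
    "share_report": {"generate_report"},
}


def execution_waves(steps):
    """Group steps into waves; a wave only contains steps whose dependencies are done."""
    sorter = TopologicalSorter(steps)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies are circular
    waves = []
    while sorter.is_active():
        ready = sorter.get_ready()    # steps with no unresolved dependencies
        waves.append(sorted(ready))   # sorted only to make the output stable
        sorter.done(*ready)           # mark the wave complete, unblocking successors
    return waves


for number, wave in enumerate(execution_waves(workflow), start=1):
    print(f"wave {number}: {wave}")
```

For this graph, the two data-gathering steps land in the same wave, while every other step has to wait for its predecessor to finish.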

That coordination layer is increasingly critical. In agentic systems, the CPU is not simply feeding accelerators; it acts as the orchestrator for tool use, memory access, service coordination, scheduling and control-flow decisions across the workflow.

As the number of concurrent tasks increases, these dependencies begin to expose limitations in how systems are designed. Workloads can become unevenly distributed, with some resources underutilized while others are saturated. Memory and I/O can become points of contention, slowing overall execution even when additional compute is available.

This creates a situation where adding more threads or increasing workload volume does not always translate to better system performance. Instead, inefficiencies accumulate across the system and reduce throughput while increasing the cost of running each task.
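One common way to reason about this effect is Amdahl's law, which is brought in here as an illustrative model rather than something the article itself specifies. It assumes a fixed fraction of each workflow is serialized (for example, waiting on shared memory or I/O) and cannot be sped up by adding workers. The 20% serial fraction below is an assumed value chosen only for illustration.

```python
def amdahl_speedup(serial_fraction: float, workers: int) -> float:
    """Ideal speedup over a single worker when `serial_fraction` of the work
    cannot be parallelized (Amdahl's law)."""
    if not 0.0 <= serial_fraction <= 1.0:
        raise ValueError("serial_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / workers)


SERIAL_FRACTION = 0.2  # assumed share of time spent on contended memory or I/O

for workers in (1, 4, 16, 64):
    speedup = amdahl_speedup(SERIAL_FRACTION, workers)
    print(f"{workers:>3} workers -> {speedup:.2f}x")
```

Under this assumption the speedup can never exceed 1 / 0.2 = 5x, so going from 16 to 64 workers adds far less than going from 1 to 4.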

What this means for designing AI infrastructure

The rise of agentic AI has changed not only how systems are built, but also how the infrastructure that supports them is designed. There is now a greater emphasis on coordination, sustained throughput and efficient resource management and utilization, as workloads become increasingly defined by continuous processes that must run reliably over time.

This means less emphasis on peak performance in individual components, and more on how those components work together as a system. Performance is no longer just about how fast a task can be completed, but how consistently tasks can be executed across many concurrent workflows within available power and capacity limits. Compute, memory and I/O must remain balanced to ensure that performance can scale without introducing bottlenecks.

Agentic AI also changes how efficiency is measured, shifting the focus to how much useful work a system can sustain per watt and per rack, while maintaining consistent latency across many concurrent workflows. This extends efficiency beyond model inference to a broader systems challenge.
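As a rough sketch of what such a measurement could look like, the Python below computes completed tasks per joule (equivalent to tasks per second per watt) and a tail latency percentile from one measurement window. The `Measurement` type, the function names and all sample numbers are hypothetical and exist only to illustrate the arithmetic; they are not taken from any benchmark.

```python
import math
from dataclasses import dataclass


@dataclass
class Measurement:
    """One hypothetical measurement window for a rack or server."""
    completed_tasks: int
    window_seconds: float
    average_power_watts: float
    latencies_ms: list


def tasks_per_joule(m: Measurement) -> float:
    """Useful work per unit of energy (same as tasks per second per watt)."""
    energy_joules = m.average_power_watts * m.window_seconds
    return m.completed_tasks / energy_joules


def latency_percentile(latencies_ms, percentile: int) -> float:
    """Nearest-rank percentile: the smallest value with at least `percentile`%
    of samples at or below it."""
    ordered = sorted(latencies_ms)
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    return ordered[rank - 1]


# Illustrative numbers only.
sample = Measurement(
    completed_tasks=12_000,
    window_seconds=60.0,
    average_power_watts=400.0,
    latencies_ms=[10, 12, 11, 50, 13, 12, 11, 10, 14, 12],
)

print("tasks per joule:", tasks_per_joule(sample))
print("median latency (ms):", latency_percentile(sample.latencies_ms, 50))
print("p99 latency (ms):", latency_percentile(sample.latencies_ms, 99))
```

Tracking the tail percentile alongside the energy figure reflects the point above: a system that is efficient on average but has a long latency tail is not delivering consistent performance.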

Arm’s first-ever production silicon product, the Arm AGI CPU – an Arm-designed CPU for AI data centers – is designed to address these challenges for the next generation of AI infrastructure. Its design scales compute, memory, and I/O together so that each task has the resources it needs to run efficiently, enabling predictable performance across many concurrent, orchestration-heavy workloads within strict power envelopes.

This supports more consistent execution across complex workflows, helping systems maintain performance without relying on excess capacity or compensating for imbalances elsewhere in the stack. As more agentic systems move into production, the ability to sustain performance while managing resource constraints will determine how effectively they can be deployed at scale.

Extending agentic AI from cloud to edge

Agentic AI workloads are also starting to run beyond the cloud and data center, with parts of their execution moving closer to the user on the device, enabling decisions to be made quickly, privately, and with local context.

For example, if a user booking a holiday asks an agent to “plan a week-long trip to Italy in June”, the agent checks flights, compares prices, selects accommodation, plans an itinerary and completes bookings. Some steps, such as large-scale data retrieval, may run in the cloud, while others, such as managing user preferences or keeping track of the process, can run on the device to avoid repeated delays.

This creates a distributed process where tasks are split between cloud and edge, with the overall aim being that each step in the agentic AI process runs reliably anywhere. Again, this is where the role of the CPU becomes critical, as it not only coordinates workflows across environments but also orchestrates between on-device compute elements such as GPUs and NPUs. This ensures each task runs on the most appropriate component and enables more efficient execution of AI workloads within device constraints.
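The split between cloud and device can be illustrated with a small placement rule. This is a simplified Python sketch under stated assumptions: the `Step` fields, the `place` function and the rule itself (private context stays on the device, large-scale retrieval goes to the cloud, everything else defaults to the device) are hypothetical and only mirror the trip-planning example, not any real scheduler.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """A hypothetical workflow step with two placement-relevant traits."""
    name: str
    needs_large_scale_data: bool = False
    uses_private_context: bool = False


def place(step: Step) -> str:
    """Return 'device' or 'cloud' for a step using an assumed, simplified rule."""
    if step.uses_private_context:
        return "device"   # keep user preferences and local state on the device
    if step.needs_large_scale_data:
        return "cloud"    # large-scale retrieval is assumed to need cloud resources
    return "device"       # default to local execution to avoid extra round trips


trip = [
    Step("load_user_preferences", uses_private_context=True),
    Step("search_flights", needs_large_scale_data=True),
    Step("compare_prices", needs_large_scale_data=True),
    Step("track_booking_progress"),
]

for step in trip:
    print(f"{step.name}: {place(step)}")
```

A production system would likely weigh more factors, such as latency targets, battery state and which on-device compute element is free, but the same idea applies: the orchestrating code decides where each step runs.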

Building for the next phase of AI

Supporting agentic AI workloads is not just about increasing capacity, but about designing systems that can operate efficiently under sustained, real-world conditions.

Arm’s approach to this new era of computing from cloud to edge reflects this shift. By focusing on how compute is delivered at scale, across different environments and workloads, Arm provides the foundation for running the next generation of agentic AI systems.

Any re-use permitted for informational and non-commercial or personal use only.