What Arm-based innovations happened in April 2026?

From industry conversations and ecosystem milestones to new technical resources, April offered a broad look at where Arm innovation is showing up. This month’s roundup spans physical AI (including robotics and AI-defined and autonomous vehicles), cloud, developer tooling, and research-driven advances, all of which reflect a bigger shift: more intelligence moving into real-world systems, and more demand for the efficient compute needed to support it.

IBM and Arm explore dual-architecture hardware for the next era of enterprise AI

Dual-architecture hardware is an emerging approach to enterprise infrastructure that allows Arm-based software environments to operate alongside established mission-critical systems, helping organizations adopt AI and data-intensive workloads without forcing disruptive platform changes.

This IBM-Arm collaboration expands infrastructure choice while preserving the reliability, security, and availability enterprises expect in core environments such as IBM Z and LinuxONE. Arm brings its power-efficient architecture, workload enablement expertise, and broad software ecosystem into these environments, giving enterprises more flexibility to deploy and scale modern workloads.

Signal65 evaluates Arm-powered NVIDIA DGX Spark for local AI development

NVIDIA DGX Spark is a compact, Arm-powered AI workstation built on the GB10 Grace Blackwell Superchip, bringing the full NVIDIA AI software stack to developers’ desks for local AI development. Signal65’s evaluation showed that DGX Spark delivered up to 41% faster CPU rendering, 50% higher memory bandwidth, and 3.2x faster AI prompt processing than comparable x86 small-form-factor workstations.

With Arm Cortex-X925 and Cortex-A725 CPUs, unified memory, and native support for tools like CUDA, vLLM, NeMo, Docker, and Kubernetes, the platform helps developers prototype, fine-tune, and run larger AI workloads locally, while maintaining a path from desktop to cloud and data center deployments.

How Arm and Epic Games are supporting smoother mobile game experiences

Unreal Engine is a leading development platform for mobile games, where performance tuning increasingly means optimizing for sustained FPS, thermals, battery life, and device fragmentation—not just peak visuals.

Following Arm’s Developer Summit at the GDC Festival of Gaming 2026, Epic Games shared practical guidance for Unreal Engine developers on using continuous optimization, automated testing, and profiling to deliver smoother experiences across real-world mobile hardware. Arm tools such as Streamline, part of Arm Performance Studio, give developers deeper visibility into Arm-based devices, helping teams identify CPU and GPU bottlenecks and make targeted optimizations with more confidence.

Why the sim-to-real gap is becoming a defining issue for physical AI

The sim-to-real gap is the difference between how a robot or AI system performs in simulation and how it behaves in the physical world, where noisy sensor data, unpredictable conditions and real-time constraints add complexity. Closing it requires more than better models; it also depends on compute that can process sensor data, run AI workloads and respond within strict power and thermal limits.

The Arm compute platform supports intelligence across the stack, from sensor-level processing to higher-performance AI, helping robotics and physical AI developers balance responsiveness, scalability and efficiency on the path to deployment. Suraj Gajendra, VP Product and Solutions, Physical AI Business Unit, Arm, joined leaders from Cadence, NVIDIA, DynaRobotics and Rivian to discuss how better simulation, real-world data loops and rigorous validation can make physical AI reliable beyond controlled environments.

Shaping safer, software-defined experiences with JLR and Codethink

Software-driven and AI-defined vehicles are becoming the foundation for the next generation of premium electric vehicle (EV) experiences, where intelligence, safety and adaptability increasingly depend on the underlying compute platform.

Through the DRIVE35 Collaborate programme, Arm is working with JLR and Codethink to help advance AI-driven EV architectures built for greater reliability, faster software integration and long-term scalability. Built on Arm’s automotive compute platform, the project highlights how Arm technology can help accelerate the shift to safer, more flexible and more efficient vehicle development.

How Uber is using Arm-based cloud compute to speed real-time trips and deliveries

AWS Graviton4 is a cloud processor built on the Arm Neoverse platform, designed to deliver the performance and efficiency needed for large-scale, latency-sensitive workloads. Uber uses Graviton4 to help match riders, drivers and deliveries in milliseconds, showing how Arm-based infrastructure can improve responsiveness during demand spikes, while also helping reduce energy use and optimize costs.

Moving more “Trip Serving” workloads to AWS gives Uber the flexibility to scale these real-time services faster and more reliably. It is a strong example of how Arm-based cloud compute is enabling production AI and real-time decision-making at a global scale.

Why Arm’s investment in Wayve matters for embodied AI in automotive

Embodied AI for autonomous driving uses end-to-end AI models and in-vehicle compute to help cars understand and respond to real-world driving conditions more adaptively. That is what makes Wayve’s $60 million Series D extension significant. With backing from Arm, AMD and Qualcomm Ventures, the company is expanding its AI Driver deployment across more vehicle platforms and compute architectures.

For Arm, this highlights the growing need for scalable, efficient compute in autonomous vehicles, where real-time performance, power efficiency and integration flexibility are critical. By providing the compute foundation for these systems, Arm is supporting a faster, more flexible path for automakers to bring autonomous driving capabilities to market at scale.

How developers can assess Hugging Face Spaces for Arm64 readiness

Hugging Face Spaces have become an important path for testing and deploying AI applications, but many were built and validated only for x86 environments, which creates hidden friction for teams targeting Arm64 platforms. This blog shows how Docker MCP Toolkit and the Arm MCP Server can analyze a Hugging Face Space for Arm64 readiness in minutes and help developers quickly identify issues, such as missing Arm64 container support and architecture-locked dependencies, before they derail a build.
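
To make the two issue types above concrete, here is a minimal Python sketch of a manual readiness check. It does not use the Docker MCP Toolkit or the Arm MCP Server, and it does not reflect how those tools work; the function name `find_arm64_blockers` and both heuristics (a base image pinned to `linux/amd64`, and a wheel filename tagged `x86_64` or `amd64`) are assumptions chosen for illustration only.

```python
import re

# Hypothetical heuristics for illustration; not the checks any Arm tool performs.
AMD64_PLATFORM = re.compile(r"--platform[=\s]+linux/(amd64|x86_64)", re.IGNORECASE)
X86_WHEEL = re.compile(r"(x86_64|amd64)\.whl", re.IGNORECASE)


def find_arm64_blockers(dockerfile_text: str, requirements_text: str) -> list[str]:
    """Return human-readable warnings for likely Arm64 portability problems."""
    warnings = []
    # A FROM line pinned to an x86-64 platform means no Arm64 image will be pulled.
    for number, line in enumerate(dockerfile_text.splitlines(), start=1):
        stripped = line.strip()
        if stripped.upper().startswith("FROM") and AMD64_PLATFORM.search(stripped):
            warnings.append(f"Dockerfile line {number}: base image pinned to x86-64")
    # A direct link to an x86-64 wheel is an architecture-locked dependency.
    for number, line in enumerate(requirements_text.splitlines(), start=1):
        if X86_WHEEL.search(line):
            warnings.append(f"requirements line {number}: x86-64-only wheel")
    return warnings


if __name__ == "__main__":
    # Made-up example inputs; example.com is a placeholder host.
    dockerfile = "FROM --platform=linux/amd64 python:3.11-slim\nRUN pip install -r requirements.txt\n"
    requirements = "numpy\nhttps://example.com/wheels/demo_pkg-1.0-cp311-cp311-linux_x86_64.whl\n"
    for warning in find_arm64_blockers(dockerfile, requirements):
        print(warning)
```

A text scan like this only catches explicit pins. It cannot tell whether a package published on an index actually ships an Arm64 build, which is the kind of question the tooling described in the blog is meant to answer.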

How Keil Studio for GitHub Codespaces is simplifying cloud-based embedded development

Keil Studio for GitHub Codespaces is a cloud-based embedded development environment that brings Arm development tools into the browser through GitHub Codespaces. That makes embedded work faster to start, easier to standardize, and better suited for collaboration across distributed teams.

In this Arm Community blog, Christopher Seidl, Director Product Management Embedded Tools, explains how the platform helps developers build, debug, and work together on Arm projects without the usual local setup burden.

Enabling AI on medical-grade wearables

Advanced medical-grade wearables are moving beyond basic activity tracking into clinically relevant, continuous health monitoring, thanks to ultra-thin sensors, efficient AI, and low-power Arm-based compute.

In this Arm Community blog, Becky Ellis, University Research Enablement Senior Manager, explores how researchers at the University of Texas at Austin are combining e-tattoo sensors with Arm-enabled weightless neural networks to process vital signals on-device with high accuracy and far lower energy demands. The result is a promising path toward longer-lasting, real-time monitoring of cardiovascular and cognitive states without relying on bulky batteries or constant offline analysis.

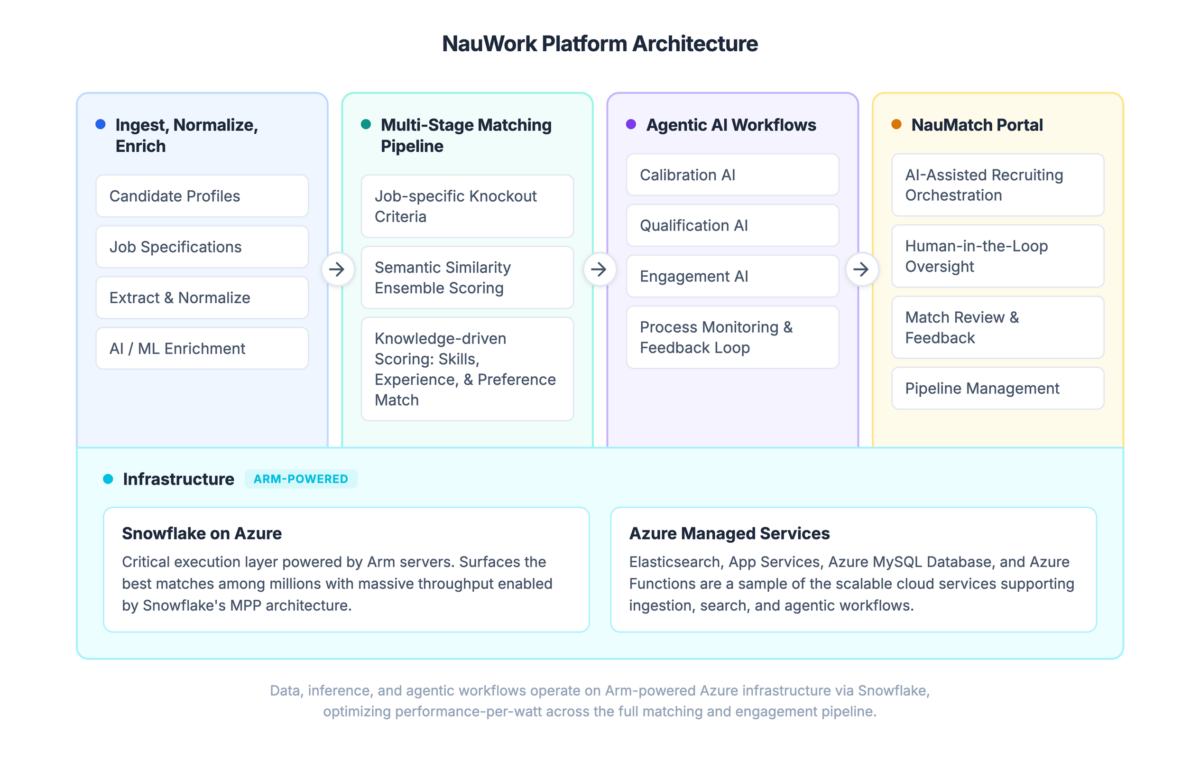

Arm and NauWork are bringing agentic AI to semiconductor hiring

Agentic AI uses autonomous software agents to manage complex tasks, making it well suited to high-signal workflows, such as semiconductor hiring.

In this Arm Community blog, Ben Harlander, Director of Data Science + ML at NauWork, explains how Arm and NauWork are applying that model to improve talent matching, accelerate candidate screening, and help companies hire specialized engineers more efficiently. For semiconductor businesses, where hiring delays can directly affect product innovation and execution, that means a faster, more scalable path to building critical teams.

How generative AI is making Arm architecture easier to explore

Generative AI tools can help developers learn faster, work more efficiently, and unlock new ways to engage with complex technical information.

In this Arm Community blog, Jade Alglave, Lead Formal Architect and Fellow at Arm, introduces “The Architecture Speaks,” an experimental generative AI tool designed to make Arm architecture concepts easier to understand by helping readers explore the Arm Architecture Reference Manual more intuitively. This gives developers a faster, more accessible way to work with highly detailed technical documentation without losing the depth and accuracy of the original source.
