Arm’s global platform evolution to meet growing computing and ecosystem demands

AI is entering a new phase, shifting from experimentation to continuous, large-scale deployment of systems that can reason, plan, and act.

The rise of agentic AI systems is accelerating this shift in computing, increasing the scale, complexity, and persistence of AI workloads, and placing new demands on the infrastructure that supports them.

Across regions, the constraints are clear. Power availability limits how much AI infrastructure can be deployed. Physical space is restricting expansion in existing data centers. As AI systems scale, the complexity of coordinating compute across CPUs, accelerators, and memory is increasing significantly.

This represents an inflection point – and it is reshaping what the ecosystem needs from compute platforms.

A platform evolution that meets a changing market

For more than 35 years, Arm has enabled the global compute ecosystem by providing the platform that powers infrastructure and billions of devices across markets. Our model has focused on designing the architecture, licensing it to partners, and enabling them to build custom solutions optimized for their needs. The flexibility Arm provides through its IP licensing business has allowed companies to make the customizations they need in computing power, performance, and size.

As AI infrastructure evolves, Arm’s model is expanding.

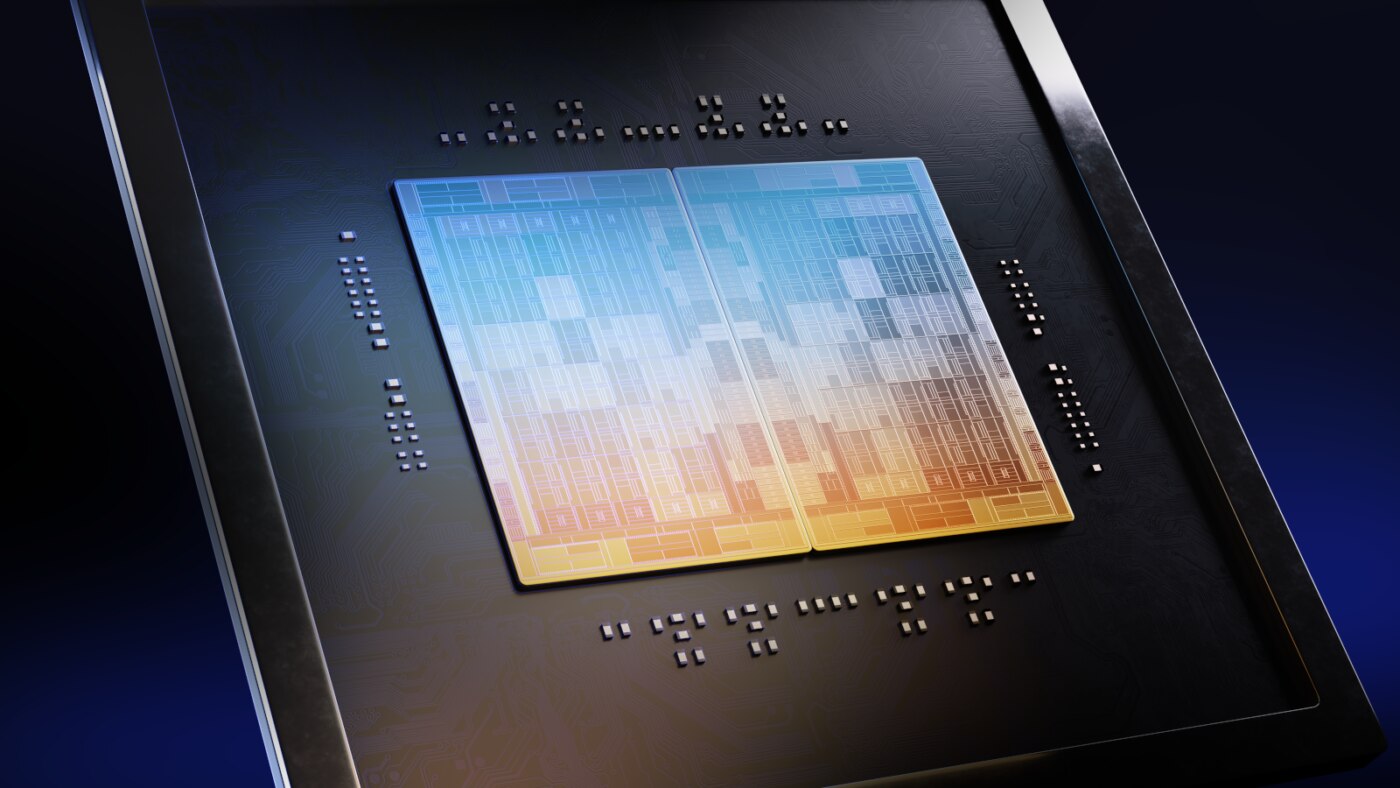

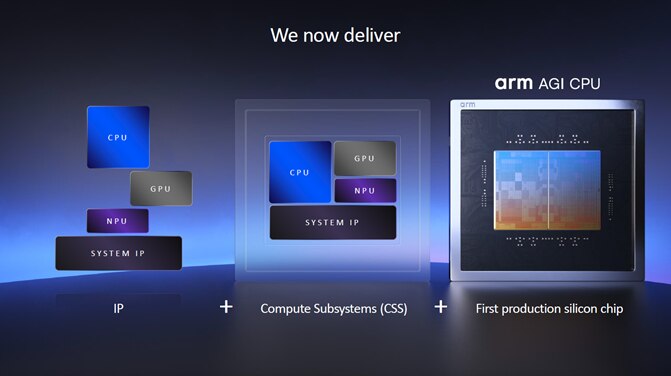

With the introduction of the Arm AGI CPU – our first Arm-designed data center CPU – we are extending Arm’s offerings into production silicon for the first time. This builds on our longstanding IP and Compute Subsystems (CSS) offerings to provide partners with the broadest set of options for deploying Arm-based solutions. Meta serves as lead partner and co-developer, working alongside Arm to optimize the Arm AGI CPU for large-scale AI infrastructure and deploying it alongside its own custom silicon.

Arm’s evolution is driven by clear demand from across the ecosystem. Partners are not looking for a single approach to compute. They are asking for flexibility: multiple pathways they can use in combination depending on their workloads, timelines, and scale. With the introduction of the Arm AGI CPU, Arm is assuming more of the significant engineering work necessary to develop leading-edge systems for AI infrastructure and providing another pathway for partners to develop customized AI solutions. This production silicon will allow partners to focus their limited engineering resources on complementary AI chips and systems, accelerating development and innovation.

For Arm, this means we now offer:

- Custom IP for differentiated innovation

- Pre-integrated CSS to accelerate development

- Production silicon that can be deployed directly

This is not a shift from Arm’s partner model. It is an expansion of it. These pathways are designed to be interoperable, enabling partners to build on Arm in the way that best fits their needs and demands.

The role of CPUs

As AI systems evolve, so does the role of the CPU.

While accelerators remain essential for training and executing AI models, CPUs play a critical role in enabling these systems to operate at scale – coordinating workloads, managing data, and ensuring systems run efficiently.

As AI becomes more distributed and continuously running, coordination demands are increasing significantly. Agentic systems generate more interactions and greater data movement, and they require sustained performance over time.

Scaling AI is no longer just about adding accelerators; it also depends on CPUs for orchestration, control, and system-level operations. This shift is driving demand for processors designed to deliver performance and efficiency at scale.

Efficiency is becoming the limiting factor

However, the ability to scale AI is increasingly constrained by infrastructure realities.

In many regions, power is emerging as a primary bottleneck, with grid capacity and new facilities taking years to come online. This makes efficiency a strategic requirement.

Improving performance within existing power and space constraints allows organizations to deploy more compute without waiting for new infrastructure. It can accelerate timelines, reduce overall costs, reduce pressure on energy systems, and expand access to AI across a broader range of markets.

Similarly, improving performance per rack allows more capability to be delivered within existing facilities, reducing the need for additional physical expansion.

As AI systems become more complex, coordination between CPUs and accelerators becomes essential to overall efficiency.

The Arm AGI CPU is designed with these constraints in mind, delivering the performance, scale, and efficiency required for AI infrastructure and enabling more than 2x performance per rack compared to x86 CPU-based racks. In other words, the same power delivery yields more than twice the performance.
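To make the performance-per-rack reasoning concrete, here is a minimal arithmetic sketch. Every number in it is a hypothetical placeholder (the facility power budget, the per-rack power draw, and the normalized performance units are assumptions, not Arm figures), and the 2.0 multiplier simply stands in for the lower bound of the "more than 2x" claim. It shows how, under a fixed power budget, higher performance per rack raises total deliverable compute or lowers the number of racks needed for a given target.

```python
import math


def racks_supported(facility_power_kw: float, rack_power_kw: float) -> int:
    """Whole racks that a fixed facility power budget can feed."""
    return math.floor(facility_power_kw / rack_power_kw)


def total_performance(facility_power_kw: float, rack_power_kw: float,
                      perf_per_rack: float) -> float:
    """Aggregate performance (normalized units) within the power budget."""
    return racks_supported(facility_power_kw, rack_power_kw) * perf_per_rack


def racks_needed(target_performance: float, perf_per_rack: float) -> int:
    """Whole racks required to reach a target aggregate performance."""
    return math.ceil(target_performance / perf_per_rack)


# Hypothetical inputs, chosen only for illustration.
FACILITY_POWER_KW = 1000.0   # assumed fixed power budget
RACK_POWER_KW = 40.0         # assumed identical power delivery per rack
BASELINE_PERF = 1.0          # baseline rack, normalized to 1.0
IMPROVED_PERF = 2.0          # stand-in for "more than 2x" per rack

baseline_total = total_performance(FACILITY_POWER_KW, RACK_POWER_KW, BASELINE_PERF)
improved_total = total_performance(FACILITY_POWER_KW, RACK_POWER_KW, IMPROVED_PERF)

print(f"Racks within budget:      {racks_supported(FACILITY_POWER_KW, RACK_POWER_KW)}")
print(f"Baseline total (units):   {baseline_total}")
print(f"Improved total (units):   {improved_total}")
print(f"Racks to match baseline:  {racks_needed(baseline_total, IMPROVED_PERF)}")
```

With these assumed inputs, the same power budget feeds the same number of racks, so doubling performance per rack doubles total performance; equivalently, matching the baseline's output takes roughly half as many racks, which is the floor-space saving described above.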

A more flexible and resilient ecosystem

These challenges are global. So is the response.

Across markets, policymakers and industry leaders are working to balance AI innovation with the realities of energy systems, infrastructure constraints, and long-term economic growth.

Flexibility and resilience across the compute ecosystem are increasingly important.

Providing multiple pathways to building and deploying infrastructure can:

- Support innovation and a diverse ecosystem

- Lower barriers to participation

- Reduce concentration risk across technology approaches and providers

- Strengthen resilience across supply chains

By expanding the Arm compute platform, from IP to CSS to silicon, we are contributing to a more adaptable and diverse ecosystem for AI infrastructure.

Arm’s expansion is supported by a broad cross-section of the global ecosystem – from hyperscalers and cloud providers to semiconductor and infrastructure partners – reflecting a shared recognition that AI infrastructure requires more flexible and diverse approaches to compute.

Building for the next era

The next phase of AI will be defined by how effectively it can be deployed.

The infrastructure decisions made today will shape how quickly AI can scale and how broadly it can be accessed.

By expanding our platform, we’re broadening the ways we support our partners and the global ecosystem in the agentic AI era. This is how we’ll enable the next phase of AI – efficiently, flexibly, and at a global scale.

Any re-use permitted for informational and non-commercial or personal use only.