GDC 2026: How Neural Graphics, AI, and Arm Tools Are Shaping Mobile Game Development

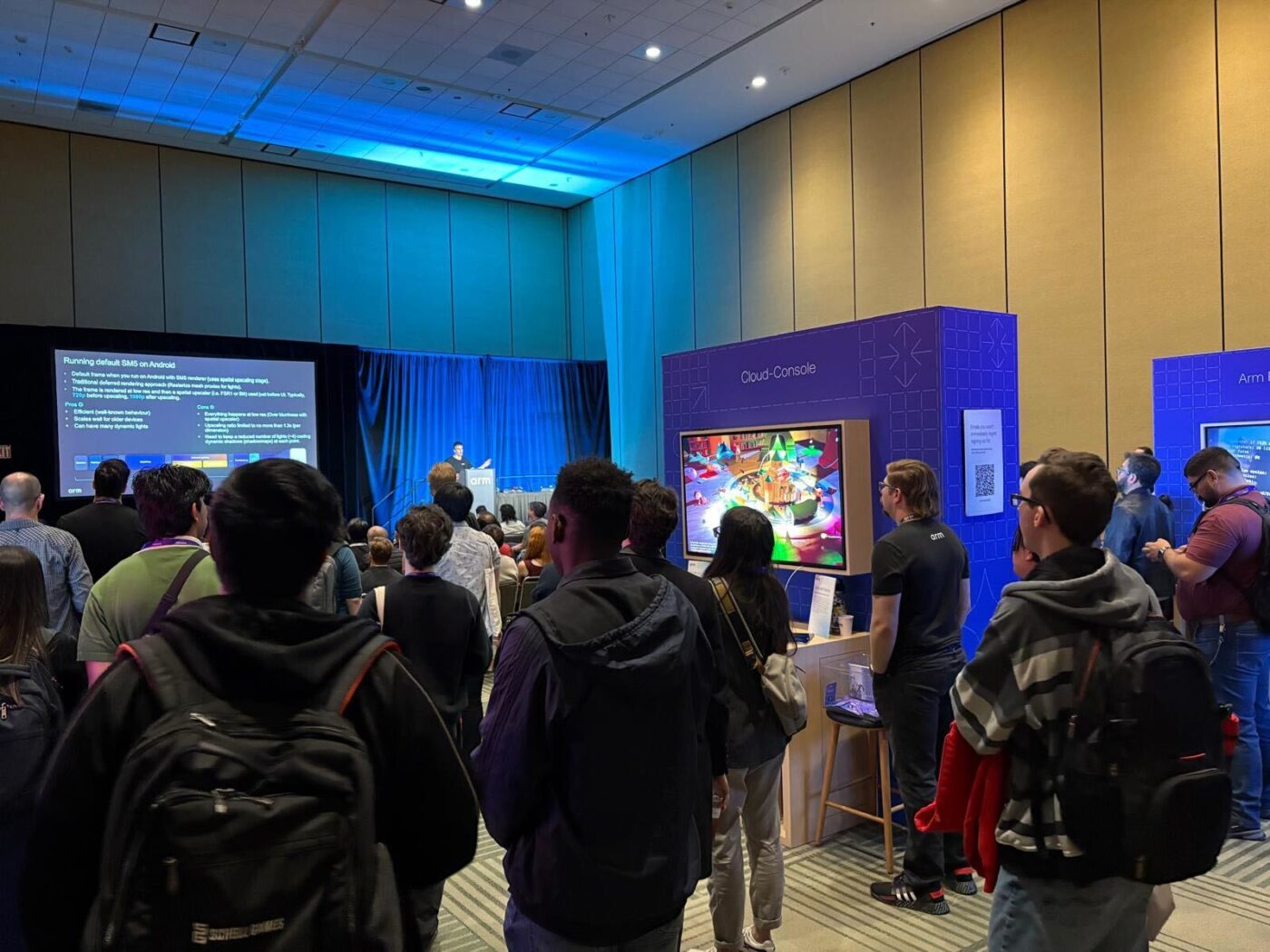

If you spent time at GDC Festival of Gaming 2026, you probably noticed a familiar theme running through many of the conversations around mobile graphics: How far can visual ambition really go on mobile without pushing devices past their limits?

For mobile game developers, graphics engineers, technical artists, and engine teams, that question now sits at the center of production planning. Studios want PC-class visuals, smoother frame rates, richer lighting, and more responsive AI-driven gameplay, but they still need to work within mobile GPU, power, battery, and thermal limits.

At Arm’s developer summit at GDC, that question was tackled repeatedly in discussions around neural graphics, mobile game performance optimization, Neural Frame Rate Upscaling, Vulkan ML, Unreal Engine workflows, Unity performance tuning, and AI-powered gameplay systems.

The shared goal: deliver games with richer visuals that still run smoothly and efficiently on mobile devices.

Key takeaways for mobile game developers

- Neural graphics are moving from research into production-ready mobile game workflows.

- Neural Frame Rate Upscaling can help improve smoothness in mobile games.

- AI-powered NPCs and gameplay systems are becoming easier to integrate into engines like Godot.

- Foundational mobile GPU optimization remains essential for frame rate, battery life, and thermal stability.

- Arm Performance Studio gives developers visibility into GPU bottlenecks across Arm-based devices.

How neural graphics are moving into mobile game development workflows

A clear theme from Arm’s developer summit was how to move neural graphics from experimentation into practical workflows.

One example was the announcement that Sumo Digital is collaborating with Arm to advance PC-class gaming on mobile using Arm neural technology, which adds dedicated neural accelerators to future Arm GPUs. The collaboration aims to speed that technology's move from research to production, giving developers an early opportunity to experiment with AI-driven, high-fidelity graphics techniques on mobile.

As Chris Bergey, EVP of the Edge AI Business Unit at Arm, said: “With Sumo Digital, we’re doing important work to ease the on-ramp for developers so they can push visual quality even further on mobile.”

Since announcing our neural technology, Arm has focused on turning neural techniques from experimental ideas into practical capabilities. At Arm’s developer summit, we introduced Neural Frame Rate Upscaling (NFRU), a neural graphics technique that generates frames to improve smoothness in mobile games. Through the Early Access Program, developers can begin using NFRU within the Neural Graphics Development Kit ahead of its general availability.

Start building with Arm neural graphics technology through the Early Access Program

In Arm’s developer summit session “Co-creating possibility: The future of mobile gaming,” Sumo Digital demonstrated how neural techniques can be applied today in a production-quality reference game environment and then brought into a live Unreal Engine workflow. For developers, the value lies in seeing how these neural graphics techniques can be implemented inside real game-engine workflows, including:

- How neural frame generation interacts with motion vectors and optical flow;

- How it coexists with ray-traced lighting; and

- How those systems can be structured to remain power-efficient on mobile.
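To make the role of motion vectors concrete, here is a minimal sketch of classical, non-neural frame interpolation: it synthesizes an in-between frame by sampling the previous frame backward along per-pixel motion vectors. It is not NFRU and uses no Arm API; the function name `interpolate_frame` and the frame layout (nested lists of pixel values, motion in pixels per rendered frame) are hypothetical assumptions for illustration. The cases this naive approach handles poorly, such as disoccluded regions and non-uniform motion, are the kind of problems any frame-generation technique, neural or otherwise, has to address.

```python
import math
from typing import List, Tuple

Frame = List[List[float]]                     # frame[y][x] = pixel value (hypothetical layout)
MotionField = List[List[Tuple[float, float]]]  # motion[y][x] = (dx, dy) in pixels


def interpolate_frame(prev: Frame, motion: MotionField, t: float = 0.5) -> Frame:
    """Synthesize a frame at fraction t between prev and the next rendered frame.

    Each output pixel backward-samples prev at the point its content occupied
    t of the way back along its motion vector (nearest neighbour, clamped to
    the frame edges). Using the vector stored at the output pixel is only
    exact for uniform motion; clamping also smears edge pixels into regions
    that were disoccluded, a typical artifact of this naive approach.
    """
    height, width = len(prev), len(prev[0])
    out: Frame = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion[y][x]
            # Round half up, then clamp the sample position inside the frame.
            sx = min(max(math.floor(x - t * dx + 0.5), 0), width - 1)
            sy = min(max(math.floor(y - t * dy + 0.5), 0), height - 1)
            out[y][x] = prev[sy][sx]
    return out
```

With a single bright pixel at x = 1 in a one-row frame and a uniform motion of two pixels per frame, `interpolate_frame([[0, 1, 0, 0, 0, 0]], [[(2, 0)] * 6])` places the pixel at x = 2, halfway to its next rendered position at x = 3.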

In another session, “Neural Graphics in practice: Arm’s SDK for next-gen game development,” Arm presented its neural technology stack as a developer-ready pathway for bringing neural rendering techniques into real-world mobile game development. The session highlighted key components of the Neural Graphics Development Kit, including Arm’s ML extension for Vulkan, the Neural Graphics SDK for game engines, and ready-to-use Unreal Engine plugins. Together, these tools give studios a practical route for integrating neural rendering, frame generation, and AI-assisted graphics features into shipping mobile games without each studio having to build a bespoke research stack.

Two other well-attended sessions at Arm’s developer summit reinforced similar themes around graphics innovation and early experimentation.

A popular talk from Infold Games showed how the studio deployed advanced real-time global illumination in Love and Deepspace. The session explained how techniques such as surfel-based lighting and radiance cascades can bring richer lighting and visual depth to mobile games while still working within mobile performance constraints.

Meanwhile, Enduring Games focused on the benefits of gaining early access to emerging technologies. The talk highlighted how experimenting with new tools and capabilities earlier in development can help studios refine their pipelines and stay ahead as graphics and gameplay expectations continue to evolve.

Start building with the world’s first open Neural Graphics Development Kit

Integrating AI-powered NPCs and gameplay systems into game development pipelines

Another key theme to emerge from Arm’s developer summit was how AI is being integrated directly into development workflows to enable more interactive mobile gaming experiences.

That idea was explored during a session led by Arm’s Kieran Hejmadi, which drew a full house. The talk walked through a complex machine learning (ML) pipeline integrated into the Godot engine, built entirely with open-source models and community-maintained plugins. The session showed how large language models (LLMs) can power more dynamic non-player character (NPC) interactions without relying on proprietary infrastructure.

The most important takeaway was accessibility: AI gameplay experimentation is no longer limited to large studios. For example, a team of students successfully assembled the system and used it to create novel gameplay scenarios driven by LLM-powered dialogue and decision-making.

In short, AI-driven gameplay systems are becoming easier to experiment with and to integrate into existing development pipelines.

Mobile game performance optimization: why profiling still matters

A recurring lesson from Arm’s developer summit was that mobile performance problems are often easy to miss until they show up on the device.

As device capabilities improve, game studios naturally aim to design for newer specifications with richer effects, denser scenes, and greater on-screen activity. But visual expectations are rising faster than efficiency gains, and on mobile, power and thermal constraints still define what’s possible.

This matters because sustained high power draw drains the battery and pushes devices toward their thermal limits, at which point the system throttles clock speeds and frames start to drop. Players notice all of these effects directly in their gaming experience.

For mobile game developers, optimization is not only about achieving a target frame rate. It is also about sustaining that frame rate over time, reducing GPU bottlenecks, avoiding thermal throttling, and preserving battery life across a wide range of Arm-based mobile devices.
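The difference between reaching and sustaining a frame rate can be quantified from a capture of per-frame times. The minimal sketch below is a hypothetical helper, not part of Arm Performance Studio or any engine API; it assumes frame times in milliseconds, however they were collected, and compares an early and a late window of the session to expose slowdowns that build up over time, such as those caused by thermal throttling.

```python
from statistics import mean
from typing import Dict, List


def frame_pacing_report(
    frame_times_ms: List[float], target_fps: float = 60.0, window: int = 600
) -> Dict[str, float]:
    """Summarize whether a target frame rate was sustained, not just reached.

    frame_times_ms: per-frame durations in milliseconds, in capture order.
    window: frames in the early and late windows that are compared
    (600 frames is roughly 10 seconds at 60 fps).
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    budget_ms = 1000.0 / target_fps  # about 16.67 ms per frame at 60 fps
    over_budget = sum(1 for ft in frame_times_ms if ft > budget_ms)
    early = frame_times_ms[:window]
    late = frame_times_ms[-window:]
    return {
        "budget_ms": budget_ms,
        "avg_fps": 1000.0 / mean(frame_times_ms),
        "pct_frames_over_budget": 100.0 * over_budget / len(frame_times_ms),
        "early_window_fps": 1000.0 / mean(early),
        "late_window_fps": 1000.0 / mean(late),
    }
```

For a hypothetical session that renders 600 frames at 16 ms and then, once the device heats up, 600 frames at 20 ms, the average of about 55.6 fps hides a drop from 62.5 fps early in the session to 50 fps at the end, with half of all frames missing the 16.67 ms budget.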

This is where Arm Performance Studio helps. As Arm Developer Evangelist John French explained in his session, “Performance tuning and upscaling for mobile development in Unity,” Performance Studio is a comprehensive suite of tools for profiling Arm-based hardware. Its tools, including Frame Advisor, Streamline, RenderDoc, and Mali Offline Compiler, provide deep visibility into GPU behavior, making it easier to identify performance issues, isolate bottlenecks, and apply targeted optimizations with confidence. Developers can use them to analyze rendering workloads and make informed decisions about visual quality, upscaling, and performance tradeoffs.

Download Arm Performance Studio

Start building with Arm neural graphics and mobile game optimization tools

The conversations at Arm’s developer summit at GDC 2026 made one thing clear: the next phase of mobile gaming will be defined by smarter use of compute across hardware and development workflows.

Neural graphics techniques are moving from research into real workflows, AI is becoming easier to integrate directly into gameplay systems, and developers now have more powerful tools to understand and optimize how their games run on mobile hardware.

Together, these developments are helping game studios push visual ambition further, while maintaining the efficiency and stability that mobile devices demand. For developers, that means creating richer worlds, more responsive characters, and smoother experiences without compromising performance or battery life.

For mobile game developers, the opportunity is clear: start testing neural graphics, NFRU, AI gameplay workflows, and Arm profiling tools early so performance, efficiency, and visual quality can be considered together from the beginning of production.

Now is the time for developers to start experimenting with neural graphics through the Neural Graphics Development Kit and Early Access Program, laying the groundwork to integrate advanced AI-driven upscaling and frame-generation techniques into game development pipelines.

FAQs

What were the top mobile gaming trends from GDC 2026?

The top mobile gaming trends from GDC 2026 included neural graphics, AI-powered gameplay systems, mobile GPU optimization, frame generation, upscaling, and developer tools that help studios balance visual quality with power, battery, and thermal efficiency.

What is Arm Neural Frame Rate Upscaling?

Arm Neural Frame Rate Upscaling, or NFRU, is a neural graphics technique that generates frames to improve smoothness in mobile games. Developers can begin experimenting with NFRU through Arm’s Neural Graphics Development Kit and Early Access Program.

How can developers optimize mobile game performance on Arm-based devices?

Developers can use Arm Performance Studio, including tools such as Frame Advisor, Streamline, RenderDoc, and Mali Offline Compiler, to profile Arm-based hardware, identify GPU bottlenecks, and apply targeted mobile rendering optimizations.

How are LLMs being used in game development?

Large language models can support more dynamic NPC dialogue and decision-making. At Arm’s developer summit, a Godot-based machine learning pipeline showed how open-source models and community plugins can be used to create AI-powered gameplay scenarios.

Why do neural graphics matter for mobile games?

Neural graphics can help mobile game developers improve image quality, frame rate, and rendering efficiency while staying within the power, battery, and thermal constraints of mobile devices.


Any re-use permitted for informational and non-commercial or personal use only.