A comprehensive guide to understanding Arm Neoverse

A decade ago, cloud infrastructure was dominated by web applications and enterprise workloads with relatively predictable performance and power profiles. Today, it must scale artificial intelligence (AI) workloads, secure multi-tenant environments, and distribute compute beyond the data center and closer to where data is created.

Cloud platforms now operate across global regions where AI workloads demand greater memory bandwidth and sustained performance. At the same time, distributed systems push compute closer to users and machines, while operators face mounting pressure to improve performance-per-watt, strengthen architectural security, and shorten time to deployment.

Infrastructure has become increasingly distributed, software-defined, and AI-enabled, and it must deliver high performance while scaling efficiently within fixed power and cost constraints.

And that is exactly what Arm Neoverse was built for.

What is Arm Neoverse?

Arm Neoverse is a family of infrastructure-class CPU cores built on the Arm architecture and optimized for cloud computing, high-performance computing (HPC), AI and machine learning workloads, and edge deployments. These cores form a scalable foundation for building:

- Cloud data center processors

- AI head node CPUs

- Energy-efficient server platforms

- Custom infrastructure silicon for hyperscale environments

Unlike processors designed primarily for end-user devices, Arm Neoverse CPUs are engineered for high core scalability across cloud servers. They offer sustained throughput for demanding workloads, strong performance-per-watt in data centers, and large memory bandwidth for AI and HPC applications, and they are built for platform-level integration and multi-tenant security in shared environments.

In large-scale deployments, for instance, performance improvements that appear incremental on paper translate into higher workload density across racks. Higher instructions per cycle, stronger memory bandwidth, and scalable core designs increase the amount of compute delivered per rack within a fixed power budget. This enables more cloud services, containers, and AI workloads per node without bottlenecks.
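
To make the rack-level arithmetic concrete, here is a minimal sketch. Every figure in it (the rack power budget, per-node power draw, and per-node throughput) is a hypothetical value chosen for illustration, not a measurement of any Neoverse-based system, and the helper name `rack_throughput` is likewise invented for this example.

```python
def rack_throughput(rack_power_w, node_power_w, node_throughput):
    """Return (nodes_per_rack, total_throughput) under a fixed rack power budget."""
    nodes = int(rack_power_w // node_power_w)  # only whole nodes fit in the budget
    return nodes, nodes * node_throughput


# Hypothetical baseline: 15 kW rack, 500 W nodes, 1,000 requests/s per node.
baseline = rack_throughput(15_000, 500, 1_000)

# Hypothetical newer node: 10% more throughput at the same power draw.
improved = rack_throughput(15_000, 500, 1_100)

print(baseline)  # (30, 30000)
print(improved)  # (30, 33000)
```

Under these assumed numbers, the same rack serves 3,000 more requests per second with no additional power, which is the sense in which a per-node gain compounds at rack scale.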

It’s this alignment between architectural design and infrastructure reality that makes Arm Neoverse unique.

The Arm Neoverse portfolio: V-Series and N-Series

To understand how Arm Neoverse scales across modern infrastructure, it helps to look at the portfolio side by side. Each core is built on the same architectural foundation, but optimized for a different balance of performance, efficiency, scalability, and deployment requirements.

| Core | Architecture | Performance profile | Quantified gains | Key difference | Optimized for |
|---|---|---|---|---|---|
| Neoverse V3 | Armv9 | Maximum performance for cloud, HPC, ML | Double-digit performance uplift over V2 (cloud + ML workloads) | First Neoverse core with Arm Confidential Computing Architecture (CCA) support | AI-driven data centers, hyperscale cloud, secure multi-tenant workloads |
| Neoverse V2 | Armv9 | High-performance cloud / HPC / ML | Up to 2× performance vs Neoverse V1 (cloud + ML workloads) | Includes Scalable Vector Extension 2 (SVE2) and Memory Tagging Extension (MTE); designed for scalable infrastructure platforms | Hyperscale cloud, HPC clusters |
| Neoverse V1 | Armv8.4-A | Performance for HPC and AI | 50% more performance over Neoverse N1 | Dual 256-bit SVE vector units; vector-length agnostic SVE | HPC workloads, vector-intensive applications |
| Neoverse N3 | Armv9.2-A | Optimized for performance-per-watt | 20% better performance-per-watt vs N2; nearly 3× ML performance vs N2 | Scales from 8 to 192 cores and beyond (with Neoverse S3 platform IP) | Hyperscale cloud, telecom infrastructure, networking, edge |
| Neoverse N2 | Armv9 | Balanced performance and efficiency | 40% IPC uplift vs N1 while maintaining strong power efficiency | First Armv9 infrastructure CPU; SVE2 and MTE support | Scale-out cloud, cloud-native workloads, network infrastructure |
| Neoverse N1 | Armv8.2-A | Efficient cloud-native compute | 40%+ price-performance and TCO gains reported by partners | Cloud-optimized design for compute density; widely adopted in cloud instances | Cloud-native workloads, hyperscale cloud |
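
On a Linux system, one way to check which of the features named in the table a given Arm CPU exposes is to read the `Features` line of `/proc/cpuinfo`. The sketch below assumes the flag names the arm64 Linux kernel reports (`sve`, `sve2`, `mte`). The helper name `arm_features` is hypothetical, and the sample text is illustrative rather than output captured from a real machine.

```python
def arm_features(cpuinfo_text):
    """Return the set of CPU feature flags from arm64 /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "Features":
            return set(value.split())
    return set()  # no Features line found (e.g. not an arm64 Linux system)


# Illustrative sample only; on a real machine, read the file instead:
#   with open("/proc/cpuinfo") as f:
#       text = f.read()
sample = "processor\t: 0\nFeatures\t: fp asimd atomics sve sve2\n"

flags = arm_features(sample)
for name in ("sve", "sve2", "mte"):
    print(name, name in flags)
```

A missing flag does not necessarily mean the core lacks the feature. The kernel or platform firmware may simply not have enabled it, so treat this as a quick check rather than a definitive capability report.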

Arm Neoverse in action

Arm Neoverse is a foundational CPU platform across modern cloud and AI infrastructure, powering systems deployed by major hyperscalers and forming the compute backbone of converged AI data centers.

- NVIDIA Grace and Vera Rubin platforms: NVIDIA’s Grace CPU Superchip is built on Arm Neoverse V2 with high-bandwidth memory to support AI and HPC pipelines. Meanwhile, the Vera Rubin platform extends this model toward next-generation AI systems, including agentic reasoning workloads, and reinforces the CPU’s role in managing increasingly complex AI infrastructures.

- AWS Graviton4 and Graviton5: AWS Graviton4 processors, built on Arm Neoverse V2, power general-purpose cloud workloads such as databases, web applications, analytics, and machine learning. Graviton5, powered by Neoverse V3, advances this foundation in the converged AI data center, supporting both large-scale AI inference and traditional cloud services within the same infrastructure.

- Microsoft Azure Cobalt 100 and 200: Azure Cobalt 100, based on Neoverse CSS N2, targets scale-out cloud-native workloads across infrastructure services and distributed applications. Meanwhile, the Cobalt 200, built on Neoverse CSS V3, extends performance density and energy efficiency for next-generation cloud environments.

- Google Axion: Axion processors power general-purpose cloud workloads including web and application servers, open-source databases, analytics engines, containerized services, and CPU-based AI inferencing. This enables balanced compute for cloud-native and AI-adjacent workloads within the same infrastructure footprint.

The overall takeaway is that the Arm Neoverse V-series is built for maximum performance in cloud and AI-driven data centers, where scaling compute and strengthening security go hand in hand. The N-series, meanwhile, focuses on efficient scale, delivering strong performance-per-watt for cloud-native and telecom workloads.

What is Arm Neoverse CSS?

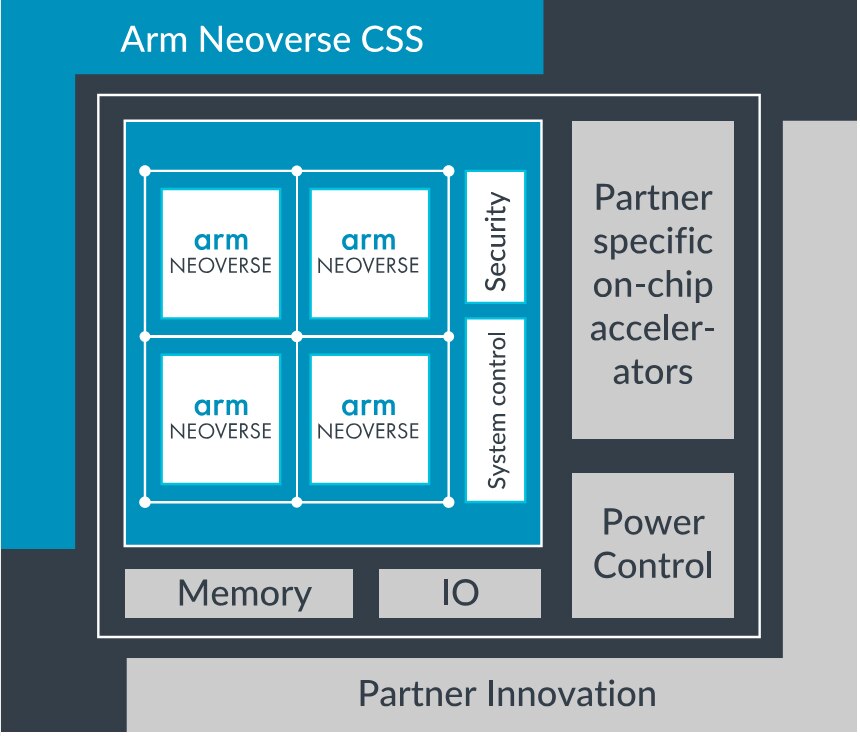

While Arm Neoverse defines the CPU cores that power modern infrastructure, Arm Neoverse Compute Subsystems (CSS) extends that foundation into pre-integrated, validated infrastructure platforms.

Neoverse CSS integrates Neoverse CPU cores with interconnect, memory subsystems, and system IP into a coherent, pre-validated compute platform. Rather than assembling these components independently, partners can build on a tested foundation and focus on workload-specific differentiation.

In practical terms, this reduces system-level validation effort and shortens the time required to bring custom infrastructure silicon to market. It provides a scalable path from architectural design to deployable cloud, AI, telecom, and edge processors.

Where Neoverse cores define performance and efficiency profiles, Neoverse CSS defines how those capabilities are delivered in production systems.

Introducing Arm AGI CPU

The evolution of Neoverse continues with the introduction of the Arm AGI CPU, a new class of production-ready silicon built on the Neoverse platform. Designed to power the next generation of AI infrastructure, it reflects the broader shift towards agentic AI workloads that demand sustained performance at massive scale.

Arm AGI CPU builds directly on the strengths that have made Neoverse the foundation of today’s leading cloud platforms. By extending the Arm compute platform beyond IP and CSS and delivering silicon, Arm is giving customers greater choice in how they deploy Arm compute, while reflecting growing demand from the ecosystem for production-ready Arm platforms that can be deployed at scale.

The infrastructure foundation for what comes next

When we talk about AI infrastructure, accelerators often dominate the spotlight, and yet the CPU remains fundamental. It coordinates accelerators, orchestrates tasks, processes data, manages memory hierarchies, enforces multi-tenant security, and supports general-purpose compute alongside specialized AI pipelines. As infrastructure scales across cloud and edge environments, the need for a balanced, efficient, and secure CPU foundation becomes more pronounced.

Arm Neoverse is built around that responsibility, aligning architectural design with the realities of cloud, AI, networking, and edge deployments and reinforcing the CPU’s role as the control and coordination layer of modern infrastructure. As compute architectures diversify, the infrastructure CPU remains the foundation that enables them to operate cohesively.

Any re-use permitted for informational and non-commercial or personal use only.